Continue…

Part Three: The Internet Network

We’ve made it from your connection to Edmonton, now the data is outside of the MCSnet network and has to get to the different servers around the world connecting to the Internet.

After the radio on your roof, the main parts of the MCSnet network are the access points (APs) on the towers that subscribers connect to, the tower-to-tower feeds, and the connection to the fiber for the region. The best way to understand how performance is affected along this network is through a roadway analogy, so let’s dig in and see if we can figure out this wireless ISP thing.

To illustrate some of the difficulties and obstacles associated with providing internet, we will use a highway analogy. Imagine a busy two-lane highway full of cars going from point A to point B, with all of the cars travelling at the speed limit. If we can’t increase the speed limit, how can we get more cars to point B in less time? By increasing the number of lanes. With the internet, the number of cars that can get to point B in a given time is called the bandwidth, a term that directly illustrates where the limitation is. The bandwidth on our highway would be the number of lanes for the traffic to share, and with wireless it’s the range of frequencies, which is the width of the wireless band it uses (e.g. a 20 MHz bandwidth). If you read through the Wi-Fi section, you won’t be surprised that there are only a few slices of frequencies available to use on wireless, so it’s not possible to keep adding lanes to this highway.

Why can’t we increase the speed limit of the highway? After all, 16 lanes still won’t get many cars to point B if they are only going 3 km/h. The reason is that in our analogy the speed of the cars is the speed of the data, and the data is already at a real speed limit: the speed of light for most of the route. The time it takes to get from point A to point B is called the latency, and it is mainly determined by the distance the data needs to travel. Latency can be measured with a ping test, which times how long the data takes to get there and back.
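To put rough numbers on that speed limit, here is a minimal Python sketch. The function name and the 300 km distance are ours, picked only for illustration, and the figure of about 200,000 km/s for light travelling through glass fiber is an approximation. Real ping times come out higher, because the equipment along the route adds its own delay.

```python
# Rough lower bound on ping time from distance alone.
SPEED_OF_LIGHT_KM_S = 299_792.458   # in a vacuum; radio through air is very close to this
FIBER_SPEED_KM_S = 200_000.0        # approximate speed of light inside glass fiber

def min_round_trip_ms(distance_km, speed_km_s=SPEED_OF_LIGHT_KM_S):
    """Smallest possible there-and-back time, in milliseconds."""
    one_way_s = distance_km / speed_km_s
    return 2 * one_way_s * 1000

# Hypothetical 300 km route, first over the air and then through fiber.
print(round(min_round_trip_ms(300), 2))
print(round(min_round_trip_ms(300, FIBER_SPEED_KM_S), 2))
```

Doubling the distance doubles the minimum ping, and no amount of extra lanes changes that.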

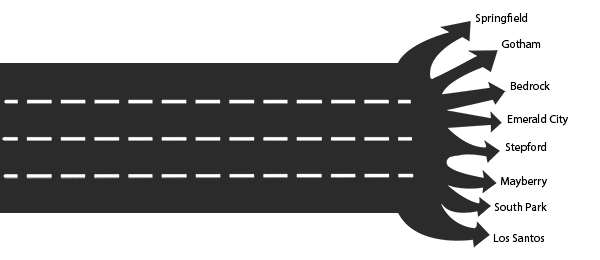

Here we see our highway. Point B is on the right, where it feeds multiple fictional cities.

If your connection was right at point B off of the highway with no other cities to share with, that would be called a dedicated connection, which should be more consistent but can be much more expensive to operate. Instead, the vast majority of internet connections share a feed off of point B like in the image above. When the internet slows down due to congestion, it’s not because the highway is moving slower; the highway just can’t hold enough cars to keep all of the feeds off of point B fed at the same time. It’s rush hour.

The dedicated model was more dominant when the internet first started. In our highway analogy, each city would have a guaranteed rate of cars coming in; the idea is that each city’s traffic rate is reserved so that it’s available when needed. We have 4 lanes and 8 cities, so each city gets about half a lane’s worth of traffic. This solves the slowdown issue: as busy as any city may be, it can’t affect the rate of cars to the others. The big downside is that the city of Bedrock can no longer get its double-wide shipment of brontosaurus burgers in, since it is only allotted half a lane of traffic, which is a shame since the highway is actually only busy for a few hours in the evening.

The same holds true for the internet. It’s mostly only busy from about 8 pm to midnight, so while a guaranteed rate is nice, it leads to slower top speeds (“double lane downloads” like HD video are not possible), and the network is barely utilized for a large portion of the day. It’s also more expensive because it doesn’t allow for more cities/subscribers to split the cost and bring costs down. This was the early age of ‘broadband’ internet, where a 1.5 Mbps T1 line was more than $1,000 a month and it was essentially business only; everyone else was still rocking dial-up, which averaged about 0.024-0.048 Mbps. It’s important to note that the majority of these concerns only apply to wireless internet; the cable and fiber connections in the more urban centers have a larger amount of bandwidth available to them.

Let’s scrap the reservation system. If the highway is free enough, then Bedrock can get its double lane shipments in at the low times of the day, and if it’s during the busy time, then there won’t be any room for them and the problem takes care of itself. Traditional internet use has only been for short bursts, so this sharing system works very well to get the best speeds for everyone. If the highway is open and a city can use all four lanes to get its cars in within 1 second (instead of 8 seconds with the reservation system), then let them have at it, and now the highway is free sooner for another city to do the same.
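The 1 second versus 8 seconds comparison falls straight out of the lane counts. Here is a small Python sketch of that arithmetic; the function name and the shipment size of 4 "lane-seconds" are made up for the example.

```python
LANES = 4    # lanes on the highway
CITIES = 8   # cities sharing it

def delivery_time_s(shipment_lane_seconds, lanes_available):
    """Seconds needed to move a shipment, given how many lanes it may use."""
    return shipment_lane_seconds / lanes_available

reserved_share = LANES / CITIES   # half a lane per city under the reservation system
shipment = 4.0                    # hypothetical shipment: 4 lane-seconds of cars

print(delivery_time_s(shipment, reserved_share))  # 8.0 (reserved half lane)
print(delivery_time_s(shipment, LANES))           # 1.0 (all four lanes, open highway)
```

The shipment is the same size either way; the only thing that changes is how many lanes it is allowed to use.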

With the traditional internet it works exactly this way: one subscriber grabs a webpage in 0.1 seconds using the full pipe, then a couple of moments later another subscriber downloads some emails in 0.15 seconds, and things are just zipping along! If we watch the traffic on our highway during the busy time, we find that at any moment, for every 8 cities connected, there is about 1 city’s worth of traffic on the highway, so we can connect 8 times as many cities to share the cost.
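The sharing arithmetic can be sketched in a few lines of Python. This is only an illustration: the function names are ours, and the one-in-eight activity figure is the rough number from the highway example, not a measurement.

```python
def average_active(connected, active_fraction):
    """Average number of cities (subscribers) sending traffic at the same moment."""
    return connected * active_fraction

def cities_per_highway(active_fraction):
    """How many bursty cities can share a highway sized for one busy city."""
    return 1 / active_fraction

ACTIVE_FRACTION = 1 / 8   # assumed: each city is on the highway about 1/8 of the time

print(average_active(8, ACTIVE_FRACTION))    # 1.0 city's worth of traffic
print(cities_per_highway(ACTIVE_FRACTION))   # 8.0 times as many cities
```

This is an average, so at any particular instant more than one city may want the highway, which is where the busy-hour slowdowns come from.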

Because of the long-sleep, short-burst nature of internet activity, it became possible to share the cost of an expensive highway among many subscribers so that any one subscriber could get up to 5 Mbps for under $100. This is also why almost all ISPs list their speeds as ‘up to’. Our highway is now accessible for all, and it feeds many more than the original 8 cities. The number of connections off of the highway changes regularly, so it’s very difficult to predict the average or lowest rates for any individual city at a certain time. The 4 lanes are available through most of the day for each city, and we know it will be less at certain times.

If a competing highway advertises their 3 lane highway as up to 3 lane speeds, it forces other providers to use the same simple metric, and thus the ‘up to’ arms race is created. Accessibility is way up, and costs are way down, but what remains is finding a way to prevent one hoarding city from filling the highway constantly; we want all cities to have a chance at 4 lane speeds, so it’s not fair if Springfield is using all 4 lanes constantly for some abnormal activity.

We need a way to keep a tiny number of heavy users from clogging the lanes. If we don’t have a way to manage this, then the speeds will be slow for everyone as free lanes are never available. Throttling used to be a popular way to prevent a single connection from clogging the highway continuously. It simply means allowing all connections free access to the whole highway, but if a connection exceeds a certain amount for a set period of time, then limiting it down to its portion of a lane to make it more fair for the rest of the connections sharing with it.
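As a rough sketch of that rule in Python: the function name, the thresholds and the rates below are all hypothetical, and this is not how any particular provider implements throttling.

```python
def allowed_rate_mbps(recent_usage_mb, threshold_mb, full_rate_mbps, fair_share_mbps):
    """Return the rate a connection may use, based on its usage over the recent window."""
    if recent_usage_mb > threshold_mb:
        # Heavy use over the whole window: limit to the connection's own portion of a lane.
        return fair_share_mbps
    # Otherwise the connection keeps free access to the whole highway.
    return full_rate_mbps

# Hypothetical numbers, for illustration only.
light_user = allowed_rate_mbps(recent_usage_mb=200, threshold_mb=1000,
                               full_rate_mbps=5.0, fair_share_mbps=0.5)
heavy_user = allowed_rate_mbps(recent_usage_mb=1500, threshold_mb=1000,
                               full_rate_mbps=5.0, fair_share_mbps=0.5)
print(light_user, heavy_user)
```

How the threshold and the window are chosen decides whether the rule only ever catches the constant heavy user or also catches an ordinary subscriber in the middle of a big download.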

Bandwidth hogs only make up around 1-2% of users, so most connections shouldn’t even notice it. Even so, throttling ran into a reputation problem, mostly due to abuse by providers using it too aggressively, or using it to hamper or prevent the use of certain Internet services. The big grief with a throttled connection is that the throttling hit you right when you needed to use the internet for a download or an update, so it made the connection very unpredictable. Throttling became a faux pas because of its misuse and either disappeared or was weaseled into some fine print.

What if we let the heavy users throttle themselves? We give everyone a set amount for the month, and once they use that allowance up, they are done. This treats all parts of the internet equally, so there is no making one part go slow to make another go fast, and non-heavy users don’t have to worry about getting throttled during the one time they do anything intensive on the internet. This is the system that MCSnet uses for most of its packages, but we do offer unlimited traffic at an extra cost. It doesn’t have the immediate reactive fix that throttling does, so there can be times when the heavy users are going hard and making things slow, but there’s at least some assurance that they will not be able to do it constantly.
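The monthly allowance idea can be sketched as a simple counter. The class below is a hypothetical illustration with made-up numbers, not MCSnet’s actual accounting system.

```python
class MonthlyAllowance:
    """Tracks traffic against a fixed monthly allowance (all figures hypothetical)."""

    def __init__(self, allowance_gb):
        self.allowance_gb = allowance_gb
        self.used_gb = 0.0

    def record(self, gb):
        """Add some transferred data to this month's total."""
        self.used_gb += gb

    def remaining_gb(self):
        """Allowance left this month, never below zero."""
        return max(self.allowance_gb - self.used_gb, 0.0)

    def used_up(self):
        """True once the month's allowance is gone."""
        return self.used_gb >= self.allowance_gb

    def start_new_month(self):
        """Reset the counter when a new month begins."""
        self.used_gb = 0.0

plan = MonthlyAllowance(allowance_gb=100)
plan.record(30)
plan.record(80)
print(plan.remaining_gb(), plan.used_up())
```

Unlike a throttle, nothing here reacts to how fast the data is moving at any moment; only the running total for the month matters.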

These are the four main frequencies used in most fixed wireless internet feeds like MCSnet. This is different from the wireless signals that the cell providers use, who have a much wider range of frequencies available. The 5 and 3.65 GHz bands are licensed bands that we have to pay to access. These higher frequencies have a shorter wavelength, so they have a harder time shooting through obstacles than lower frequency radios. The 900 MHz band offers the most penetration through trees by far.

The slower top rate on 900 MHz is due to the tiny size of the band available to use. To make a comparison with our highway analogy, if the 900 MHz band is a 2 lane highway, the other bands would have a 5 lane highway or more. It’s such a letdown that the strongest band at getting connections is the slowest. The tiny size also contributes to interference issues, as there is little room for multiple providers to co-exist.

If your location is too heavily treed, then 900 MHz may be required to get any connection, unless you have a TV tower that lets us mount the radio at your location with enough elevation to shoot over the tops of the trees. There is currently no OFDM option on the 900 MHz band, but we hope to see one in the next couple of years.

In simpler terms, wireless technology uses aspects of the radio waves (amplitude, phase angle, etc.) to translate to the 1s and 0s for data; this is called modulation. Covering how the amplitude and phase angle are modulated is beyond the scope of this page, but if you are interested and technically minded, there is a good run-down of it in this AnandTech article. To put it simply, it’s just a way to get more lanes in our highway in the same frequency space. To get an OFDM connection, we first have to have OFDM access points (APs) on a nearby tower that you can connect to, and you need a strong enough signal to connect to them. OFDM is available on all bands except for the one that most needs it, which is the 900 MHz band. The decision to leave 900 MHz behind was made by the main device manufacturer, Cambium (they purchased from Motorola), who may have identified the tiny size of the band and the likelihood of interference in heavily populated areas as reasons to pass on it.

After you get connected to the tower site, we still have to get from the tower to the rest of the internet, which is another area where the speeds can vary. Most of the tower feeds are wireless: each tower has a wireless feed from another tower, which eventually gets to the fiber optic cable that feeds the region. With these wireless tower links, we are dealing with the same limited-lane highway problem; it’s just on a grander scale and is more predictable. Since the highway feeds a single point (referred to as point to point), the expected throughput can be forecast.
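One way to picture a chain of tower links is that traffic can never move faster than the slowest link it has to cross. Here is a minimal Python sketch of that idea; the function name and the link capacities are invented for the example and are not real MCSnet figures.

```python
def path_limit_mbps(link_capacities_mbps):
    """Upper bound on throughput across links in series: the slowest link."""
    return min(link_capacities_mbps)

# Hypothetical path: two tower-to-tower wireless links, then the regional fiber.
path = [300, 450, 10_000]
print(path_limit_mbps(path))  # 300
```

This is only a ceiling. How much of it a subscriber actually sees still depends on how many others are sharing each link at that moment.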

Most of these links in the MCSnet network are already on the new OFDM technology to allow for faster rates, but they can still get saturated and affect the performance. On any given weekday, it’s likely that one part or another is being upgraded somewhere in our network; it’s never-ending. After the tower feed, our network connects to the fiber that feeds the region (some towers are fed directly by fiber), which offers an incredible amount of bandwidth. This fiber has traditionally been through the Alberta SuperNet, which is a good example of how even fiber can slow down if not properly maintained, but over the years we have created our own fiber network. The fibers converge at Edmonton, where they connect to the rest of the internet.

